Bvlgari Omnia Crystalline eau de toilette 40 ml + body lotion 40 ml + shower gel 40 ml, gift set - VMD parfumerie - drogerie

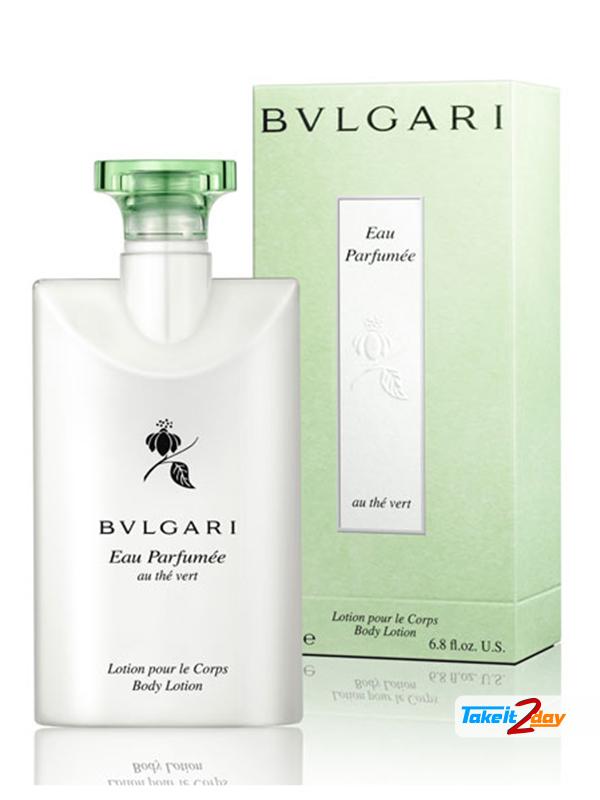

Amazon.com : Bvlgari Au Thé Vert (Green Tea) Lotion - Set of 3, 2.5 Fluid Ounce Bottles : Beauty & Personal Care

BVLGARI Eau Parfumée Au Thé Blanc Body Lotion (40 ml), Beauty & Personal Care, Fragrance & Deodorants on Carousell

Bvlgari Omnia Crystalline Lotion pour le Corps Body Lotion: Review - Budget Belleza