This article was published as part of the Data Science Blogathon

In today's technology-driven world, a large amount of data is generated every second. Constant monitoring and correct analysis of such data are necessary to obtain meaningful and useful information.

Real-time data from sensors, IoT devices, log files, social media, etc. must be closely monitored and processed immediately. Therefore, for real-time data analysis, we need a data streaming engine that is highly scalable, reliable and fault tolerant.

Data streaming

Data streaming is a way of continuously collecting data in real time from multiple data sources in the form of data streams. You can think of a data stream as a table that is continuously being appended to.

Data streaming is essential for handling massive amounts of live data. Such data can come from a variety of sources such as online transactions, log files, sensors, in-game player activities, etc.

There are several techniques for streaming data in real time, such as Apache Kafka, Spark Streaming, Apache Flume, etc. In this post, we will discuss data streaming using Spark Streaming.

Spark Streaming

Spark Streaming is an integral part of the Spark core API for performing real-time data analysis. It allows us to build scalable, high-throughput and fault-tolerant live streaming applications.

Spark Streaming supports processing real-time data from various input sources and storing the processed data in various output sinks.

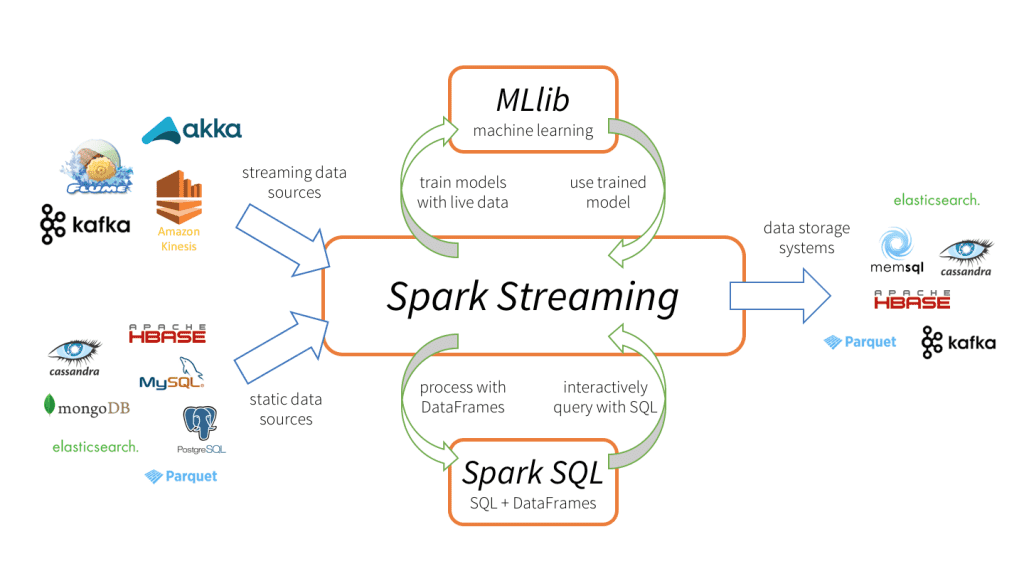

Spark Streaming has 3 main components, as shown in the picture above.

- Input data sources: Streaming data sources (such as Kafka, Flume, Kinesis, etc.), static data sources (such as MySQL, MongoDB, Cassandra, etc.), TCP sockets, Twitter, etc.
- Spark Streaming engine: Processes incoming data using various built-in functions and complex algorithms. We can also query live streams and apply machine learning using Spark SQL and MLlib respectively.
- Output sinks: Processed data can be stored in file systems, databases (relational and NoSQL), live dashboards, etc.

Such unique data processing capabilities and the range of input and output formats make Spark Streaming more attractive, leading to rapid adoption.

Advantages of Spark Streaming

-

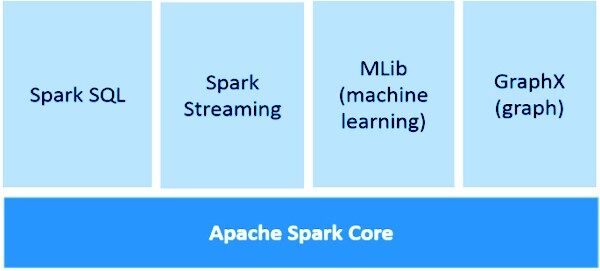

Unified transmission framework for all data processing tasks (including machine learning, graphics processing, SQL operations) in live data streams.

-

Dynamic load balancing and better resource management by efficiently balancing the workload between workers and launching the task in parallel.

-

Deeply integrated with advanced processing libraries like Spark SQL, MLlib, GraphX.

-

Faster recovery from failures by restarting failed tasks in parallel on other free nodes.

Spark Streaming Basics

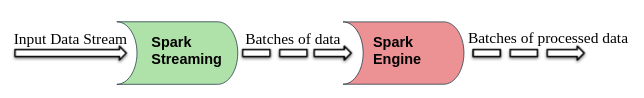

Spark Streaming splits the live input data streams into batches, which are then processed by the Spark engine.

DStream (discretized stream)

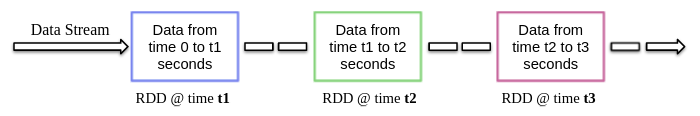

DStream is a high-level abstraction provided by Spark Streaming that represents a continuous stream of data. It can be created either from streaming data sources (such as Kafka, Flume, Kinesis, etc.) or by applying high-level operations to other DStreams.

Internally, a DStream is a sequence of RDDs, and this design allows Spark Streaming to integrate with other Spark components such as MLlib, GraphX, etc.

When creating a streaming application, we also need to specify the batch duration so that new batches are created at regular time intervals. Typically, the batch duration ranges from 500 ms to several seconds. For instance, if it is 3 seconds, the input data is collected every 3 seconds.
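To make the batch interval concrete, here is a minimal pure-Python sketch (not Spark code) of how a stream of timestamped records could be grouped into 3-second batches. The `discretize` helper and the sample events are hypothetical and made up for illustration; Spark performs this step internally and exposes each batch as an RDD of the DStream.

```python
from collections import defaultdict

def discretize(events, batch_duration):
    """Group (timestamp_seconds, record) pairs into fixed-length batches.

    Hypothetical helper for illustration only; it is not part of Spark.
    """
    batches = defaultdict(list)
    for timestamp, record in events:
        # Records arriving in [k * d, (k + 1) * d) belong to batch k.
        batch_index = int(timestamp // batch_duration)
        batches[batch_index].append(record)
    # Return the non-empty batches in time order.
    return [batches[k] for k in sorted(batches)]

# Made-up events: (arrival time in seconds, text line).
events = [(0.5, "a"), (1.2, "b"), (3.1, "c"), (5.9, "d"), (6.0, "e")]
batches = discretize(events, batch_duration=3)
print(batches)

# A transformation on the stream is applied batch by batch.
upper_batches = [[record.upper() for record in batch] for batch in batches]
print(upper_batches)
```

With a 3-second interval, the records that arrive during seconds 0 to 3 form the first batch, those from seconds 3 to 6 form the second, and so on. This sketch simply skips intervals in which nothing arrived.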

Spark Streaming allows you to write code in popular programming languages like Python, Scala and Java. Let's take a look at a sample streaming app that uses PySpark.

Sample app

As discussed earlier, Spark Streaming also allows receiving data streams through TCP sockets. So let's write a simple streaming program that receives text streams on a particular port, performs basic text cleaning (such as whitespace removal, stop-word removal, lemmatization, etc.) and prints the cleaned text on the screen.

Now let's start implementing this by following the steps below.

1. Creating a streaming context and receiving data streams

StreamingContext is the main entry point for any streaming application. It can be created by instantiating the StreamingContext class of the pyspark.streaming module.

from pyspark import SparkContext
from pyspark.streaming import StreamingContext

When creating the StreamingContext, we specify the batch duration; here it is 3 seconds.

sc = SparkContext(appName="Text Cleaning")
strc = StreamingContext(sc, 3)

Once the StreamingContext is created, we can start receiving data in the form of a DStream over TCP on a specific port. Here the hostname is "localhost" and the port is 8083, which matches the port used by the netcat command later in this article.

text_data = strc.socketTextStream("localhost", 8083)
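socketTextStream interprets the bytes it receives as UTF-8 encoded, newline-delimited text, so each line becomes one record in the DStream. The pure-Python sketch below illustrates that framing on chunks of bytes as they might arrive from a socket. The `split_lines` helper is hypothetical and is not how Spark implements its receiver.

```python
def split_lines(chunks):
    """Yield complete UTF-8 text lines from an iterable of byte chunks.

    Hypothetical helper: it only illustrates newline-delimited framing.
    """
    buffer = b""
    for chunk in chunks:
        buffer += chunk
        # Emit every complete line; keep the unfinished tail in the buffer.
        while b"\n" in buffer:
            line, buffer = buffer.split(b"\n", 1)
            yield line.decode("utf-8")

# A line may be split across TCP chunks; it is emitted once it is complete.
chunks = [b"hello wor", b"ld\nspark ", b"streaming\n"]
records = list(split_lines(chunks))
print(records)
```

In practice this means the sender should terminate every message with a newline, otherwise the text stays buffered and never shows up as a record.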

2. Performing operations on data streams

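After creating a DStream object, we can perform operations on it as required. Here, we write a custom text cleaning function.

This function converts the input text to lowercase, removes links/URLs, non-alphanumeric characters, extra spaces and stop words, and then lemmatizes the text using the NLTK library. The function is then applied to each line of the stream with map(), and pprint() prints the first elements of every batch.

import re

from nltk.corpus import stopwords
from nltk.stem import WordNetLemmatizer

# Requires the NLTK "stopwords" and "wordnet" corpora to be downloaded beforehand
stop_words = set(stopwords.words('english'))
lemmatizer = WordNetLemmatizer()

def clean_text(sentence):
    sentence = sentence.lower()
    # Remove URLs first, before punctuation is stripped
    sentence = re.sub(r"http\S+", " ", sentence)
    # Replace non-alphanumeric characters with a space
    sentence = re.sub(r"\W", " ", sentence)
    # Collapse runs of whitespace into a single space
    sentence = re.sub(r"\s+", " ", sentence)
    sentence = " ".join(word for word in sentence.split() if word not in stop_words)
    # Lemmatize each token as a verb
    sentence = [lemmatizer.lemmatize(token, "v") for token in sentence.split()]
    sentence = " ".join(sentence)
    return sentence.strip()

# Apply the function to every line of the stream and print each batch
cleaned_data = text_data.map(clean_text)
cleaned_data.pprint()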
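To see what the regular-expression steps do without installing NLTK or Spark, the sketch below applies the lowercase, URL, non-alphanumeric and whitespace steps to a sample sentence. The `basic_clean` name, the tiny stop-word set and the sample sentence are assumptions made for this illustration. It skips lemmatization, so its output will differ from that of the NLTK-based function.

```python
import re

# Tiny stand-in stop-word list (assumption); NLTK's English list is much longer.
SAMPLE_STOP_WORDS = {"the", "is", "at", "a", "and"}

def basic_clean(sentence):
    sentence = sentence.lower()
    # Drop URLs first, while they are still intact.
    sentence = re.sub(r"http\S+", " ", sentence)
    # Replace every non-alphanumeric character with a space.
    sentence = re.sub(r"\W", " ", sentence)
    # Collapse runs of whitespace into a single space.
    sentence = re.sub(r"\s+", " ", sentence)
    words = [word for word in sentence.split() if word not in SAMPLE_STOP_WORDS]
    return " ".join(words)

cleaned = basic_clean("The   DOCS are at https://spark.apache.org, and Spark is FAST!")
print(cleaned)
```

The order of the steps matters: if punctuation were stripped before the URL step, the link would already be broken into separate words and the URL pattern would only remove its first fragment.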

3. Starting the streaming service

The streaming service has not started yet. Call the start() function on the StreamingContext object to start it; it keeps receiving streaming data until awaitTermination() receives a termination command (Ctrl + C or Ctrl + Z).

strc.start()
strc.awaitTermination()

NOTE – The complete code can be downloaded from here.

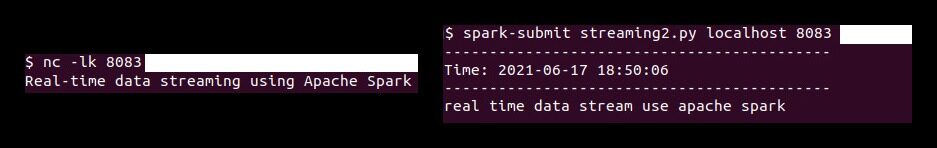

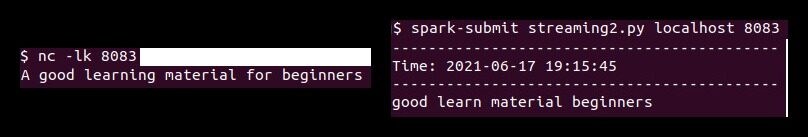

Now, first we have to run the 'nc' command (the Netcat utility) to send text data from the data server to the Spark Streaming server. Netcat is a small utility available on Unix-like systems for reading from and writing to network connections over TCP or UDP ports. Its two main options are:

- -l: Allows nc to listen for an incoming connection rather than initiating a connection to a remote host.
- -k: Forces nc to stay listening for another connection after the current connection completes.

So run the following nc command in a terminal.

nc -lk 8083
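On systems where netcat is not available, a small Python script can play a similar role. The sketch below is one possible stand-in and is not part of the original article: the `serve_lines` helper and the sample lines are hypothetical. It listens on a TCP port, waits for one client (such as the Spark receiver), and sends each line followed by a newline. To keep the sketch self-contained, it lets the operating system pick a free port and uses a plain socket client in place of Spark.

```python
import socket
import threading

def serve_lines(server_socket, lines):
    """Accept one client and send it each line, newline-terminated."""
    connection, _address = server_socket.accept()
    with connection:
        for line in lines:
            connection.sendall((line + "\n").encode("utf-8"))

# Port 0 lets the OS pick a free port for this demo; use 8083 to match the article.
server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
server.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
server.bind(("127.0.0.1", 0))
server.listen(1)
port = server.getsockname()[1]

sample_lines = ["Hello Spark", "Streaming is fun"]
worker = threading.Thread(target=serve_lines, args=(server, sample_lines))
worker.start()

# Stand-in for the Spark receiver: connect and read until the server closes.
with socket.create_connection(("127.0.0.1", port)) as client:
    received = b""
    while True:
        data = client.recv(1024)
        if not data:
            break
        received += data

worker.join()
server.close()
received_lines = received.decode("utf-8").splitlines()
print(received_lines)
```

Unlike `nc -lk`, this sketch sends a fixed list of lines instead of reading them from the keyboard, and it serves a single connection before exiting.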

Similarly, run the pyspark script in a different terminal using the following command to perform text cleaning on the received data.

spark-submit streaming.py localhost 8083

According to this demonstration, any text typed in the terminal (running netcat server) will be cleaned and the cleaned text will be printed on another terminal every 3 seconds (batch duration).

Final notes

In this article, we discussed Spark Streaming, its benefits for real-time data streaming, and a sample application (using TCP sockets) that receives live data streams and processes them as required.
